Physical AI Starts With Capture

GenAI can write code, summarize contracts, and generate marketing copy in seconds. Ask it why Line 3 is running 12% slower than yesterday, though, and it has nothing to say. The model is the same. The data isn’t there.

That gap, between what AI does with digital data and what it does with operational data, is the gap Physical AI exists to close. Closing it isn’t a model problem or a tooling problem. It’s a capture problem.

The models are here. The data isn’t. That’s the work ahead.

How We Define Physical AI

When we say Physical AI, we mean something specific, and the specificity matters because the industry uses the term loosely. Some vendors apply it to robotics. Others apply it to vision systems. We use it differently.

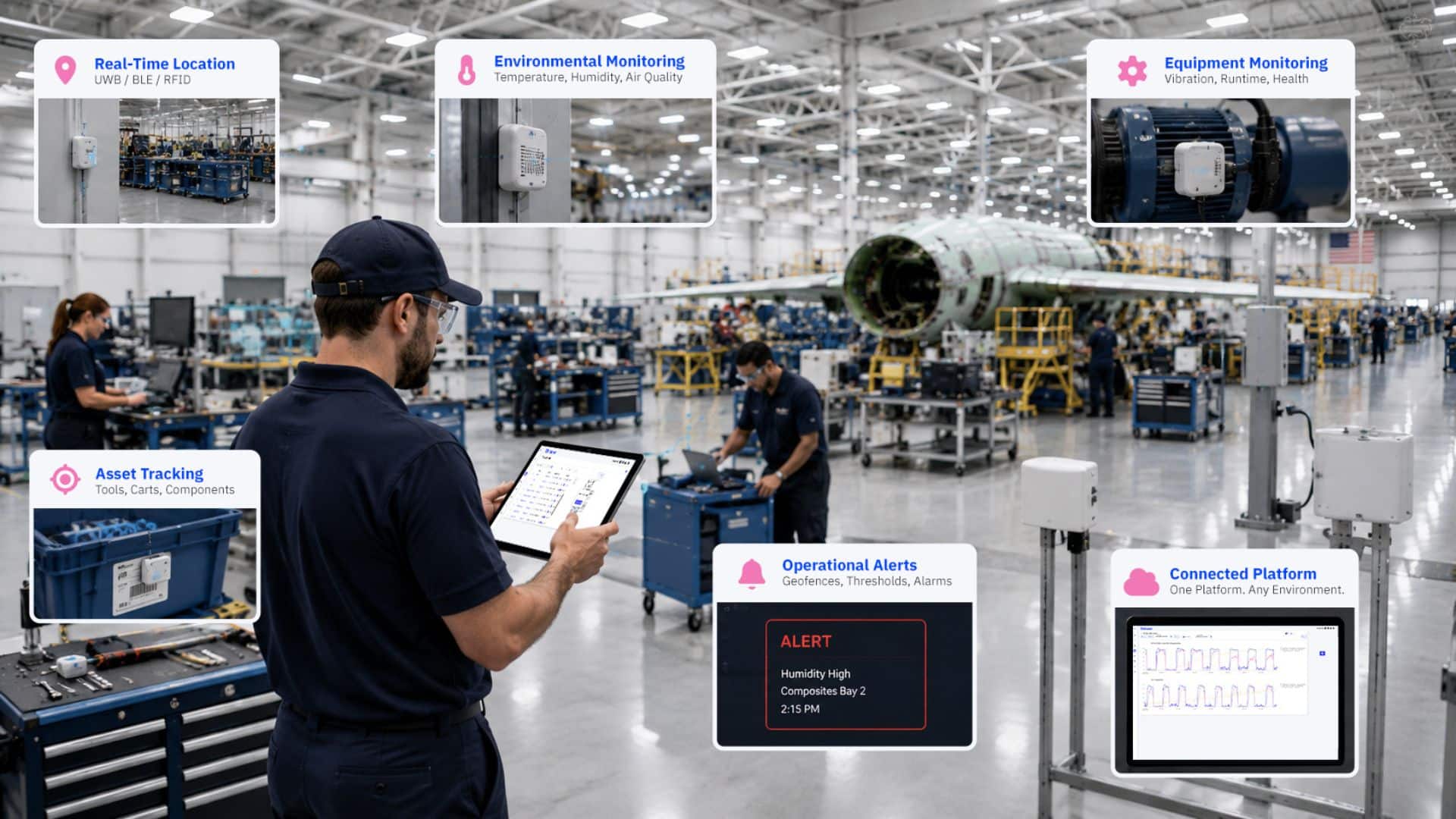

For us, Physical AI is artificial intelligence operating on real-time data from operational environments: factories, hangars, hospitals, ports, yards, and the other places where work involves people, equipment, and materials moving through real space. The defining condition is not the model or the use case; it’s the data source. If the AI is reasoning over operational data that comes from live capture in physical operations, that’s Physical AI as we mean it.

This framing leads us to a three-part stack: Capture, Learn, Act. The order is deliberate, and it is also where most operational AI strategies break down. Capture means the sensors, networks, and APIs that turn physical space into structured data. Learn means whatever model you point at that data. Act means the operators and systems making decisions from what the model returns.

The reason we lead with Capture instead of Learn is straightforward. In our experience deploying across defense, aerospace, manufacturing, and healthcare, the model is almost never the bottleneck. Therefore, defining Physical AI as a model problem misses what actually slows organizations down.

AI Without the Floor Is AI Without Context

Large language models train on text, code, and structured datasets. As a result, they excel at pattern recognition in digital environments. However, manufacturing floors, defense hangars, shipyards, and hospital wings are not digital environments. They are physical, and physical environments do not generate data on their own.

Without sensors capturing operations in real time, GenAI cannot answer the questions operators actually run on. Where is the calibrated torque wrench right now? Has humidity in Composites Bay 2 exceeded specification in the last hour? Is the HVAC unit in Building 7 showing early signs of failure? How long has Work Order 4471 been sitting at Station 12?

These aren’t analytics questions. They’re the operational questions a shift runs on, and they require structured, real-time data from the physical world. That data exists only if something captures it.
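To make that concrete, here is a minimal sketch of how two of those questions reduce to simple queries once the readings exist as structured records. Everything in it is an assumption made for illustration: the record fields, the sample values, the 55% humidity limit, and the idea that each event marks a work order arriving at a station. It is not a description of any particular platform's schema.

```python
from datetime import datetime, timedelta, timezone


def humidity_exceeded(readings, location, limit_pct, now, window=timedelta(hours=1)):
    """Return True if any humidity reading at `location` within `window`
    before `now` is above `limit_pct`."""
    return any(
        r["location"] == location
        and r["metric"] == "humidity_pct"
        and now - window <= r["timestamp"] <= now
        and r["value"] > limit_pct
        for r in readings
    )


def dwell_time(arrivals, work_order, station, now):
    """Return how long `work_order` has been at `station`, or None if its
    most recent arrival event was recorded somewhere else."""
    own = [e for e in arrivals if e["work_order"] == work_order]
    if not own:
        return None
    latest = max(own, key=lambda e: e["timestamp"])
    if latest["station"] != station:
        return None
    return now - latest["timestamp"]


# Hypothetical sample data standing in for a live feed.
utc = timezone.utc
now = datetime(2024, 1, 15, 15, 0, tzinfo=utc)

readings = [
    {"location": "Composites Bay 2", "metric": "humidity_pct",
     "timestamp": datetime(2024, 1, 15, 13, 30, tzinfo=utc), "value": 60.0},
    {"location": "Composites Bay 2", "metric": "humidity_pct",
     "timestamp": datetime(2024, 1, 15, 14, 10, tzinfo=utc), "value": 48.0},
    {"location": "Composites Bay 2", "metric": "humidity_pct",
     "timestamp": datetime(2024, 1, 15, 14, 40, tzinfo=utc), "value": 56.5},
]

arrivals = [
    {"work_order": "WO-4471", "station": "Station 9",
     "timestamp": datetime(2024, 1, 15, 11, 0, tzinfo=utc)},
    {"work_order": "WO-4471", "station": "Station 12",
     "timestamp": datetime(2024, 1, 15, 12, 45, tzinfo=utc)},
]

print(humidity_exceeded(readings, "Composites Bay 2", limit_pct=55.0, now=now))
print(dwell_time(arrivals, "WO-4471", "Station 12", now=now))
```

The queries themselves are trivial. The hard part is having `readings` and `arrivals` populated at all, which is the point of the argument here.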

The Missing Layer in Every AI Strategy

Most organizations chasing AI in operations start with the model. They license an LLM, build a data lake, and hire data scientists. What they never build is the foundation: the capture layer that turns physical operations into structured, AI-ready data.

The result is predictable. The AI works in the demo. Then it fails in production. The model is fine. The data isn’t there, isn’t clean, or doesn’t fit the questions operators actually ask.

Physical AI flips that order. First capture, then learn, then act. Without the front of the stack, nothing downstream works.

The Capture Layer

Thinaer is the Physical AI capture layer. We deploy in the environments where capture has historically been hardest (including patented coverage of classified spaces), and we carry every sensing technology a site requires: BLE, RFID, UWB, GPS, LoRaWAN, and Wi-Fi HaLow, plus 40+ sensor types across location, environmental, and equipment monitoring. Backhaul follows the same rule: wired, Wi-Fi, cellular, private 5G, or LoRaWAN, depending on what the building can support.

We contextualize every reading at capture (asset, location, process, timestamp), so teams never have to stitch that context together later. The output is structured data that flows through MQTT and REST APIs, ready for any cloud, any model.
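As a rough illustration of what a contextualized reading could look like on the wire, the sketch below attaches asset, location, process, and timestamp to a single measurement and serializes it to JSON, the kind of body an MQTT message or REST response might carry. The class name, field names, and sample values are all hypothetical; this is not the actual Sonar schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ContextualizedReading:
    """One sensor reading with its context attached at capture time.

    Field names are hypothetical, chosen only for illustration.
    """
    asset_id: str
    location: str
    process: str
    timestamp: str  # ISO 8601, UTC
    metric: str
    value: float
    unit: str


def to_payload(reading: ContextualizedReading) -> str:
    """Serialize a reading to a JSON string with stable key order."""
    return json.dumps(asdict(reading), sort_keys=True)


# Hypothetical sample reading.
reading = ContextualizedReading(
    asset_id="env-sensor-02",
    location="Composites Bay 2",
    process="layup",
    timestamp=datetime(2024, 1, 15, 14, 30, tzinfo=timezone.utc).isoformat(),
    metric="humidity_pct",
    value=47.5,
    unit="%RH",
)

payload = to_payload(reading)
print(payload)
```

Because the context travels with the value, a downstream model or dashboard can filter by location or process without joining against a separate asset registry.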

This is what “your environment decides” actually means. A shipyard has RF constraints that rule out most radios, so UWB does the work. The yard outside needs GPS. The classified bay needs BLE with patented coverage. The hospital wing needs environmental sensors for isolation compliance. The factory floor needs all of the above, plus machine utilization. It all flows through one platform. One Sonar instance. One set of APIs. One partner.

Customers never lock into a radio that doesn’t fit their next building, their next use case, or their next environment. The technology changes. The platform doesn’t.

Day One Value, Before Any AI Is in the Loop

Capture without action wastes the investment. That is why Sonar, our operational visibility application, delivers value the day the sensors go live: live maps, geofences, alerts, dashboards, and time-out tracking. Operations teams act on the data in real time, before any AI model enters the loop.
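A geofence alert is a good example of value that needs no model at all. The sketch below is a deliberately simplified illustration, assuming rectangular fences in floor coordinates and a stream of `(asset_id, x, y)` position updates. The names and the logic are hypothetical and do not describe how Sonar implements geofencing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Geofence:
    """Axis-aligned rectangular zone in floor coordinates (hypothetical model)."""
    name: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def contains(self, x: float, y: float) -> bool:
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max


def exit_alerts(fence, positions):
    """Return one alert each time an asset moves from inside the fence to outside it.

    `positions` is an iterable of (asset_id, x, y) tuples in time order.
    """
    alerts = []
    was_inside = {}
    for asset_id, x, y in positions:
        inside = fence.contains(x, y)
        if was_inside.get(asset_id, False) and not inside:
            alerts.append(f"{asset_id} left {fence.name}")
        was_inside[asset_id] = inside
    return alerts


# Hypothetical fence and position updates.
tool_crib = Geofence("Tool Crib", 0.0, 0.0, 10.0, 10.0)
updates = [
    ("cart-1", 2.0, 2.0),    # inside
    ("cart-1", 12.0, 2.0),   # crossed out: alert
    ("cart-1", 3.0, 3.0),    # back inside
    ("cart-2", 20.0, 20.0),  # never inside: no alert
]

alerts = exit_alerts(tool_crib, updates)
print(alerts)
```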

Then, when the AI does come into the loop, the data is already there.

What Happens When You Connect AI to Live Capture

Connect any model you choose (any cloud, any AI tool already in your stack) to a structured data feed from the floor, and the use cases compound. Operators ask plain-language questions about facility status and get accurate, real-time answers. Maintenance teams get predictive alerts that learn from actual equipment behavior, not synthetic baselines. Quality engineers get root-cause analysis that correlates environmental conditions with defect patterns. Production managers see shift summaries that draw on real asset movement and utilization, not the spreadsheet someone updated at noon.

None of this is possible without the capture layer. Ultimately, the AI is only as smart as the data feeding it.

The Conversation Has Been Backwards

For two years, the industry has debated which model is best, which cloud to run it on, and which vendor has the cleanest dashboard. Those debates assume the data exists. In physical operations, it usually doesn’t.

The organizations that will lead the next decade of operational AI are not the ones with the most sophisticated models. They are the ones that solved capture first. They have a structured, real-time view of what is happening across their floors, hangars, yards, and wings, and they can point any model at it. That is the shift worth paying attention to. Not better AI, but AI with something real to work with.

Every Stack Has a Foundational Layer

Every wave of enterprise technology has had a foundational layer that determined what was possible above it. Cloud needed virtualization. Mobile needed app stores. Analytics needed the data warehouse. Physical AI needs capture.

Once you build that layer, the rest of the stack becomes possible. Skip it, and the model will keep failing in production no matter how good it looks in the demo. The interesting question for operations leaders right now is not which AI to buy. It is whether the data their AI will run on actually exists yet, and what it will take to make it exist at the resolution AI requires.

Ultimately, the next decade of operational AI plays out on the floor.